Pasadena’s Economic Development and Technology Committee on Tuesday will receive a report on how artificial intelligence is already being used inside City Hall. The report describes pilot programs aimed at reducing staff workload, improving cybersecurity, and filtering public submissions, and stresses that the technology remains tightly controlled and human-supervised.

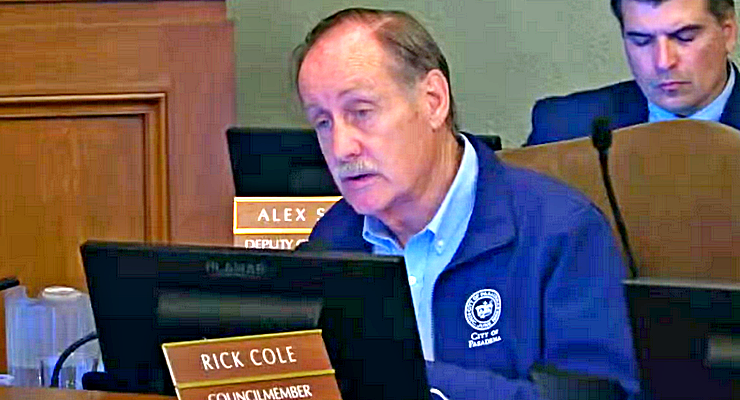

The item is informational, and no vote is required. The presentation will be delivered by the Department of Information Technology.

According to the materials, Pasadena is currently using AI in limited, defined applications rather than for automated decision-making.

All AI tools operate in an advisory role and require staff review before any output is finalized or published.

During the presentation, city staff will outline several active pilots and prototypes. One involves AI-assisted document drafting and summarization, which helps staff prepare and edit written materials.

Another tool focuses on accessibility, assisting departments in producing public content that meets Americans with Disabilities Act requirements.

The city is also using AI to address operational bottlenecks. An automated system screens online public forms to filter out spam submissions that previously bypassed standard filters, reducing staff time spent sorting invalid requests and improving response reliability.

In the area of cybersecurity, AI-powered adaptive firewalls are being used to detect and block emerging threats in real time, allowing systems to respond to new attack patterns without manual rule updates.

Informational chatbots are also in use, providing residents and staff with general city information rather than personalized or case-specific responses.

The presentation materials stress that no resident personally identifiable information is used in current AI systems.

All AI-generated content must be reviewed and approved by city employees, and vendors are required to comply with state privacy laws, including the California Privacy Rights Act. Additional safeguards include audit trails, documentation requirements and risk-tiering for each AI use case.

The department will also outline a governance framework built around equity, transparency, privacy, trust and human oversight. Materials state that AI is deployed only when it addresses a specific, measurable operational need.

Next steps outlined in the presentation include forming a cross-functional AI risk governance committee, updating procurement language to address AI-specific contracts, continuing staff training on validation and bias awareness, and establishing a data task force to guide responsible data use across departments.